Meta Pioneers Just-in-Time Testing, Revolutionizing Software Quality with AI-Driven Bug Detection

Meta has unveiled a Just-in-Time (JiT) testing methodology that dynamically generates tests during the code review process, marking a significant departure from conventional, manually maintained test suites. This approach, detailed in a Meta engineering blog post and accompanying research, has demonstrated roughly a four-fold improvement in bug detection over baseline-generated tests within AI-assisted development environments. The shift underscores a pivotal moment in software engineering, where the sheer volume and velocity of AI-generated code necessitate a radical rethinking of quality assurance paradigms.

The Shifting Landscape of Software Development and the Demise of Traditional Testing

The impetus for Meta’s JiT testing stems directly from the accelerating adoption of "agentic workflows," where artificial intelligence systems are increasingly responsible for generating, modifying, and iterating upon vast sections of codebase. In such a high-velocity, high-volume environment, the limitations of traditional software testing methods have become acutely apparent. Historically, quality assurance has relied on extensive, pre-written test suites designed to validate code against known requirements and expected behaviors. These suites, often meticulously crafted and maintained by human engineers, form the backbone of software reliability.

However, the advent of sophisticated AI code generation tools has introduced unprecedented challenges. The speed at which AI can produce code far outstrips the human capacity to write and maintain corresponding test cases. Traditional test suites become brittle, their assertions quickly rendered outdated by rapid code evolution, and their coverage struggles to keep pace with the dynamic nature of AI-driven changes. The overhead associated with updating and managing these legacy tests spirals, leading to reduced effectiveness and an increased risk of undetected regressions. This dilemma has prompted a critical need for testing solutions that can adapt instantly and intelligently to ever-changing codebases.

Ankit K., an ICT Systems Test Engineer, succinctly captured this inevitability, observing, "AI generating code and tests faster than humans can maintain them makes JiT testing almost inevitable." This sentiment resonates across the industry, highlighting a growing consensus that the traditional model is no longer sustainable for modern, AI-augmented development. Large technology companies like Meta, with their massive codebases and rapid development cycles, are at the forefront of experiencing these pressures, making them ideal incubators for such transformative solutions.

Understanding the Mechanics of Just-in-Time (JiT) Testing

JiT testing directly confronts these challenges by integrating test generation into the very fabric of the development workflow, specifically at the pull request (PR) stage. Instead of relying on a static, pre-existing suite, the system dynamically generates tests tailored to the specific code modifications (the "diff") being proposed. This is not merely about validating existing functionality; it’s about anticipating potential failure modes and constructing targeted tests designed to expose regressions introduced by the new changes.

The core objective of JiT tests is to act as "regression-catching" mechanisms. They are engineered to fail if the proposed changes introduce a bug, but pass on the original, parent revision of the code. This precise targeting ensures that developers receive immediate, relevant feedback on the stability of their modifications. The underlying pipeline that powers this intelligent test generation is a sophisticated amalgamation of several advanced technologies:

- Large Language Models (LLMs): These powerful AI models are crucial for understanding the semantic intent behind code changes and generating human-like test descriptions or actual test code.

- Program Analysis: Static and dynamic analysis techniques are employed to scrutinize the code diff, identify potential risk areas, understand data flow, and pinpoint sections of code that are most likely to be affected by the proposed changes.

- Mutation Testing: A key component, mutation testing involves injecting synthetic defects (mutations) into the code to simulate realistic failure scenarios. The system then validates whether the newly generated tests are robust enough to detect these injected faults. If a test fails to catch an intentionally introduced bug, it indicates a weakness in the test’s design or coverage, prompting further refinement.
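The mutation-testing idea in the last bullet can be illustrated with a minimal sketch. Everything here is hypothetical and for illustration only: `is_adult`, the two hand-written mutants, and the two tests are assumptions, not code from Meta's system. The sketch shows how a test that never probes the boundary lets both injected defects survive, while a boundary-focused test kills both.

```python
from typing import Callable, List

Impl = Callable[[int], bool]

# Hypothetical function under test (an assumption for illustration).
def is_adult(age: int) -> bool:
    return age >= 18

# Hand-written mutants: each injects one small synthetic defect.
def mutant_boundary(age: int) -> bool:
    return age > 18   # ">=" changed to ">"

def mutant_constant(age: int) -> bool:
    return age >= 21  # constant 18 changed to 21

# A "test" here is a function that returns True when it passes.
def weak_test(impl: Impl) -> bool:
    # Only checks values far away from the boundary.
    return impl(30) is True and impl(5) is False

def boundary_test(impl: Impl) -> bool:
    # Checks the values on either side of the boundary.
    return impl(18) is True and impl(17) is False

def mutation_score(test: Callable[[Impl], bool], mutants: List[Impl]) -> float:
    """Fraction of mutants on which the test fails (i.e. that it 'kills')."""
    killed = sum(1 for mutant in mutants if not test(mutant))
    return killed / len(mutants)

MUTANTS = [mutant_boundary, mutant_constant]

if __name__ == "__main__":
    print("weak_test score:", mutation_score(weak_test, MUTANTS))
    print("boundary_test score:", mutation_score(boundary_test, MUTANTS))
```

Both tests pass on the original `is_adult`, so pass/fail status alone cannot tell them apart; the mutation score is what exposes the weakness of `weak_test`.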

Mark Harman, a Research Scientist at Meta, underscored the philosophical shift inherent in this approach, stating, "This work represents a fundamental shift from ‘hardening’ tests that pass today to ‘catching’ tests that find tomorrow’s bugs." This statement encapsulates the proactive, future-oriented nature of JiT testing, moving beyond mere validation to active bug discovery.

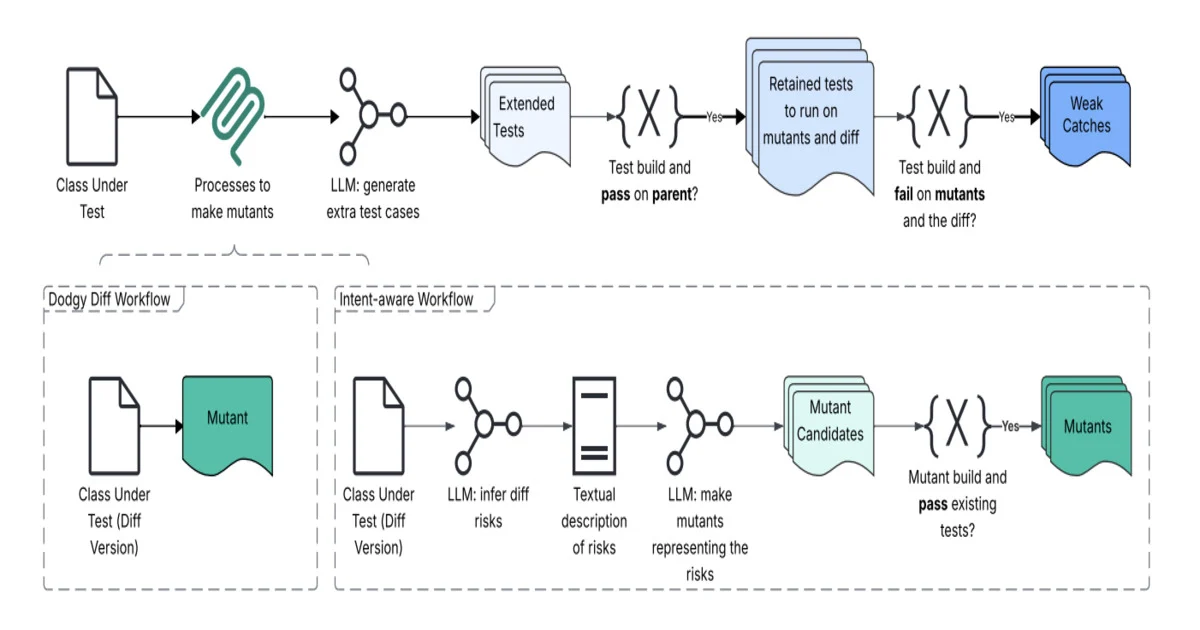

The Architecture Behind the Innovation: Dodgy Diff and Intent-Aware Workflows

At the heart of Meta’s JiT testing framework lies a sophisticated architecture dubbed "Dodgy Diff and intent-aware workflow." This system re-conceptualizes a code change not merely as a textual difference between two versions of code, but as a rich semantic signal loaded with behavioral intent and potential risk.

The process unfolds in several intricate steps:

- Semantic Diff Analysis: The system first analyzes the code diff to extract its underlying behavioral intent. This goes beyond simple line-by-line comparison, delving into what the developer intends the code to do and how it might impact existing functionality. It also identifies areas of heightened risk based on the nature of the changes (e.g., modifications to critical paths, complex logic, or shared libraries).

- Intent Reconstruction and Change-Risk Modeling: Based on the semantic analysis, the system reconstructs the developer’s likely intent and builds a comprehensive model of the risks associated with the change. This involves predicting what could potentially break or lead to unexpected behavior as a direct consequence of the proposed modifications.

- Mutation Engine and "Dodgy" Variant Generation: The identified risk areas and inferred intent feed into a powerful mutation engine. This engine systematically generates "dodgy" variants of the code, that is, versions with subtle, synthetic defects injected at strategic points. These mutations simulate realistic failure scenarios that a developer might inadvertently introduce. For instance, a mutation might change an operator (e.g., `>` to `>=`), modify a variable assignment, or alter a conditional statement.

- LLM-Based Test Synthesis: Leveraging Large Language Models, a dedicated test synthesis layer then generates new test cases. These tests are carefully aligned with the inferred developer intent and are specifically designed to expose the "dodgy" variants. The goal is to create tests that would fail if the actual code behaved like one of the mutated versions, thereby proving their effectiveness in catching real bugs.

- Filtering and Prioritization: Before surfacing the results to the developer, a crucial filtering mechanism prunes noisy or low-value tests. This ensures that developers are presented only with highly relevant, actionable test failures, preventing information overload and maintaining focus on critical issues. The most pertinent test results are then seamlessly integrated into the pull request review interface, providing immediate feedback.
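The mutation and filtering steps above can be sketched in a few lines using Python's standard `ast` module. This is a simplified illustration under stated assumptions, not Meta's implementation: the `can_withdraw` function, the `SwapNthComparison` transformer, and the hand-written candidate tests (standing in for LLM-synthesized ones) are all hypothetical, and the only mutation operator shown is the relational-operator swap mentioned in the text.

```python
import ast
from typing import Callable, Dict, List

# Hypothetical code under change (an assumption for illustration).
SOURCE = """
def can_withdraw(balance, amount):
    return amount > 0 and amount <= balance
"""

# Relational-operator swaps, e.g. ">" to ">=".
SWAPS = {ast.Gt: ast.GtE, ast.GtE: ast.Gt, ast.Lt: ast.LtE, ast.LtE: ast.Lt}

class SwapNthComparison(ast.NodeTransformer):
    """Swap the operator of the n-th eligible comparison in the tree."""
    def __init__(self, target: int) -> None:
        self.target = target
        self.seen = 0

    def visit_Compare(self, node: ast.Compare) -> ast.AST:
        self.generic_visit(node)
        op_type = type(node.ops[0])
        if op_type in SWAPS:
            if self.seen == self.target:
                node.ops[0] = SWAPS[op_type]()
            self.seen += 1
        return node

def load(tree: ast.Module, name: str) -> Callable:
    """Compile a module AST and return the named function from it."""
    namespace: dict = {}
    exec(compile(tree, "<variant>", "exec"), namespace)
    return namespace[name]

def build_mutants(source: str, name: str) -> List[Callable]:
    """Produce one 'dodgy' variant per eligible comparison."""
    count = sum(
        1 for node in ast.walk(ast.parse(source))
        if isinstance(node, ast.Compare) and type(node.ops[0]) in SWAPS
    )
    mutants = []
    for i in range(count):
        tree = SwapNthComparison(i).visit(ast.parse(source))
        ast.fix_missing_locations(tree)
        mutants.append(load(tree, name))
    return mutants

def filter_tests(tests: Dict[str, Callable], original: Callable,
                 mutants: List[Callable]) -> List[str]:
    """Keep tests that pass on the original and fail on at least one mutant."""
    kept = []
    for name, test in tests.items():
        if not test(original):
            continue  # fails on unmutated code: discard as noise
        if any(not test(mutant) for mutant in mutants):
            kept.append(name)
    return kept

# Hand-written stand-ins for LLM-synthesized candidate tests.
CANDIDATES: Dict[str, Callable] = {
    "t_exact_balance": lambda f: f(100, 100) is True,
    "t_zero_amount": lambda f: f(100, 0) is False,
    "t_typical": lambda f: f(100, 50) is True,   # kills no mutant
    "t_wrong": lambda f: f(100, 50) is False,    # fails on the original
}

original = load(ast.parse(SOURCE), "can_withdraw")
mutants = build_mutants(SOURCE, "can_withdraw")
kept = filter_tests(CANDIDATES, original, mutants)

if __name__ == "__main__":
    print(kept)
```

In this sketch the filter drops `t_typical` because it cannot distinguish the original from any variant, and drops `t_wrong` because it fails before any defect is injected. A production filter would presumably weigh more signals, such as inferred intent and risk, which are not modeled here.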

This multi-faceted approach, illustrated by the "Dodgy diff" architecture, represents a profound leap in automated testing, moving from reactive validation to proactive, intelligent fault detection.

Empirical Validation and Promising Results

Meta’s JiT testing system has not merely been theorized; it has undergone extensive empirical evaluation. The company reported testing the system on a massive scale, involving over 22,000 generated tests. The results unequivocally demonstrate the efficacy of the approach:

- 4x Improvement in Bug Detection: Compared to baseline-generated tests (which likely represent earlier, less sophisticated automated test generation methods or simpler heuristics), JiT testing achieved approximately a four-fold increase in its ability to detect bugs. This significant uplift speaks volumes about the precision and intelligence of the new methodology.

- 20x Improvement in Meaningful Failure Detection: Beyond simply detecting bugs, the system showed up to a 20-fold improvement in detecting "meaningful failures" compared to coincidental outcomes. This distinction is critical; it implies that the JiT system isn’t just flagging trivial issues but is pinpointing bugs that genuinely impact software functionality and reliability.

- Identification of Real Defects with Production Impact: In one specific evaluation subset, the JiT system identified 41 distinct issues. Crucially, 8 of these were confirmed as real, legitimate defects. Several of these identified bugs had the potential for significant production impact, underscoring the system’s ability to prevent costly outages or performance degradations.
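As a quick arithmetic check on the last figure, the share of surfaced issues that were confirmed as real defects in that subset works out to roughly one in five. The variable names below are illustrative; the two counts are the ones reported above, and the calculation says nothing about the remaining 33 issues beyond what the source states.

```python
issues_flagged = 41     # distinct issues identified in the evaluation subset
confirmed_defects = 8   # issues confirmed as real defects

confirmed_rate = confirmed_defects / issues_flagged
print(f"Confirmed-defect rate: {confirmed_rate:.1%}")  # prints "Confirmed-defect rate: 19.5%"
```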

These statistics are not just numbers; they represent tangible improvements in software quality, reducing the likelihood of critical bugs reaching production and enhancing the overall stability of Meta’s vast array of applications and services. The ability to catch these regressions early in the development cycle, even before human reviewers might spot them, translates into substantial savings in time, resources, and reputation.

Mark Harman, in another LinkedIn post, highlighted the broader significance of mutation testing, a foundational element of JiT: "Mutation testing, after decades of purely intellectual impact, confined to academic circles, is finally breaking out into industry and transforming practical, scalable Software Testing 2.0." This statement positions Meta’s innovation as a catalyst for a new era in software quality assurance, where academic rigor meets industrial scale.

The Broader Implications for Software Development and the Future of Testing

The introduction of JiT testing by a tech giant like Meta carries profound implications for the entire software development industry.

1. Enhanced Software Quality and Reliability: The most immediate and evident impact is the potential for significantly higher software quality. By catching serious, unexpected bugs at the pull request stage, JiT testing prevents them from propagating further into the development pipeline or, worse, into production environments. This translates to more stable applications, fewer user-facing issues, and ultimately, greater user satisfaction.

2. Accelerated Development Cycles and Increased Productivity: Traditional testing often involves significant delays as developers wait for extensive test suites to run or for human testers to manually verify changes. JiT testing, by generating and executing only the tests relevant to a change at the pull request stage, streamlines the code review process. This allows developers to receive feedback during review, iterate faster, and merge code with greater confidence, thereby accelerating development cycles and boosting overall team productivity. The reduction in maintenance overhead for brittle, long-lived test suites also frees up developer resources.

3. Evolution of Developer and QA Roles: The rise of AI-driven testing paradigms like JiT will undoubtedly reshape the roles of software engineers and quality assurance professionals. While machines will increasingly handle the grunt work of test generation and execution, human expertise will become even more critical in higher-level tasks:

- Designing robust systems: Ensuring the AI testing agents are properly configured and guided.

- Analyzing complex failures: Interpreting sophisticated test results and debugging nuanced issues that AI might flag but not fully explain.

- Exploring edge cases: Identifying novel scenarios that even an intelligent test generator might miss.

- Focus on exploratory testing and strategic quality initiatives: Shifting from repetitive manual testing to more creative, high-value quality assurance activities.

4. The Dawn of "Software Testing 2.0": As Mark Harman suggests, JiT testing, particularly its reliance on mutation testing and advanced AI, signals a transition to "Software Testing 2.0." This new paradigm is characterized by:

- Change-specific fault detection: Moving away from static correctness validation to dynamic, context-aware bug discovery.

- Reduced brittleness: Tests are generated afresh for each change rather than maintained over time, which avoids the common problem of outdated or fragile test suites.

- Human-machine collaboration: Effort shifts from humans maintaining tests to machines generating them, with humans intervening only when meaningful issues are surfaced.

5. Broader Industry Adoption and Challenges: While Meta’s success is a powerful proof point, widespread adoption across the industry will face challenges. Implementing a sophisticated JiT system requires significant investment in AI research, large language models, program analysis tools, and a robust engineering infrastructure. Smaller companies may find the initial barrier to entry high. However, as these technologies mature and become more accessible, perhaps through open-source initiatives or cloud-based services, JiT principles are likely to permeate modern software development practices. The need for specialized expertise in AI and testing will also grow.

Conclusion

Meta’s pioneering work in Just-in-Time testing represents a landmark achievement in software engineering. By harnessing the power of artificial intelligence, particularly large language models, program analysis, and mutation testing, Meta has engineered a system that not only detects bugs with unprecedented efficiency but also fundamentally redefines the relationship between code changes and quality assurance. This move from "hardening" to "catching" tests marks a crucial evolution, transforming software testing from a bottleneck into an accelerator for innovation. As AI continues to reshape the landscape of software development, intelligent, adaptive testing solutions like JiT will be indispensable in ensuring the reliability, security, and quality of the digital world we build. The future of software quality is increasingly dynamic, automated, and deeply intertwined with the advancements in artificial intelligence.