Philanthropist Jeff Atwood and Lt. Col. Alexander Vindman Advocate for Shared American Dream with $50 Million Guaranteed Minimum Income Initiative

A compelling vision for the future of the American Dream was unveiled at Cooper Union’s historic Great Hall in New York City, where prominent technologist and philanthropist Jeff Atwood, joined by Lieutenant Colonel (Ret.) Alexander Vindman, delivered a passionate address on January 7th. The event served as a platform to introduce "Stay Gold, America," an ambitious initiative centered on a multi-million dollar philanthropic pledge and a groundbreaking pilot program for Guaranteed Minimum Income (GMI) in underserved rural American communities. Atwood’s speech, derived from a widely shared blog post, emphasized the critical need for a collective recommitment to the foundational values of opportunity and shared prosperity, arguing that the American Dream remains incomplete until accessible to all.

Reclaiming the Original Vision of the American Dream

Atwood commenced his address by revisiting the foundational definition of the American Dream, first articulated by historian James Truslow Adams in 1931 amidst the throes of the Great Depression. Adams described it as "a land in which life should be better and richer and fuller for everyone, with opportunity for each according to ability or achievement… not a dream of motor cars and high wages merely, but a dream of social order in which [everyone] shall be able to attain to the fullest stature of which they are innately capable, and be recognized by others for what they are, regardless of the fortuitous circumstances of birth or position."

This expansive, inclusive definition, Atwood contended, stands in stark contrast to contemporary perceptions often narrowed to material success. Driven by a desire to understand what elements of this dream still resonated with Americans today and to make sense of the nation’s current trajectory, Atwood embarked on a personal quest. He began writing what he described as the most challenging piece of his blogging career, soliciting diverse perspectives from Americans on the personal meaning of the American Dream.

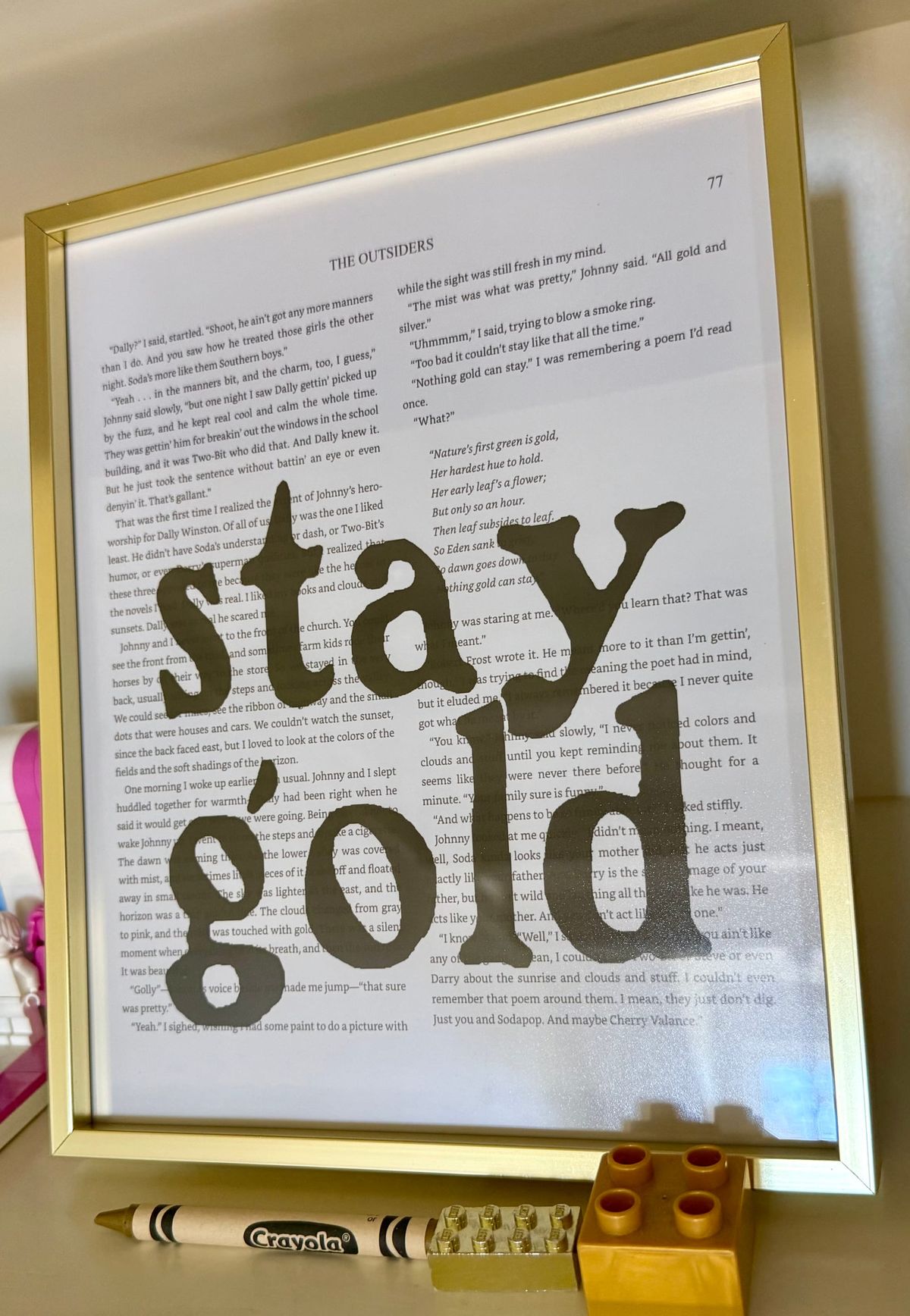

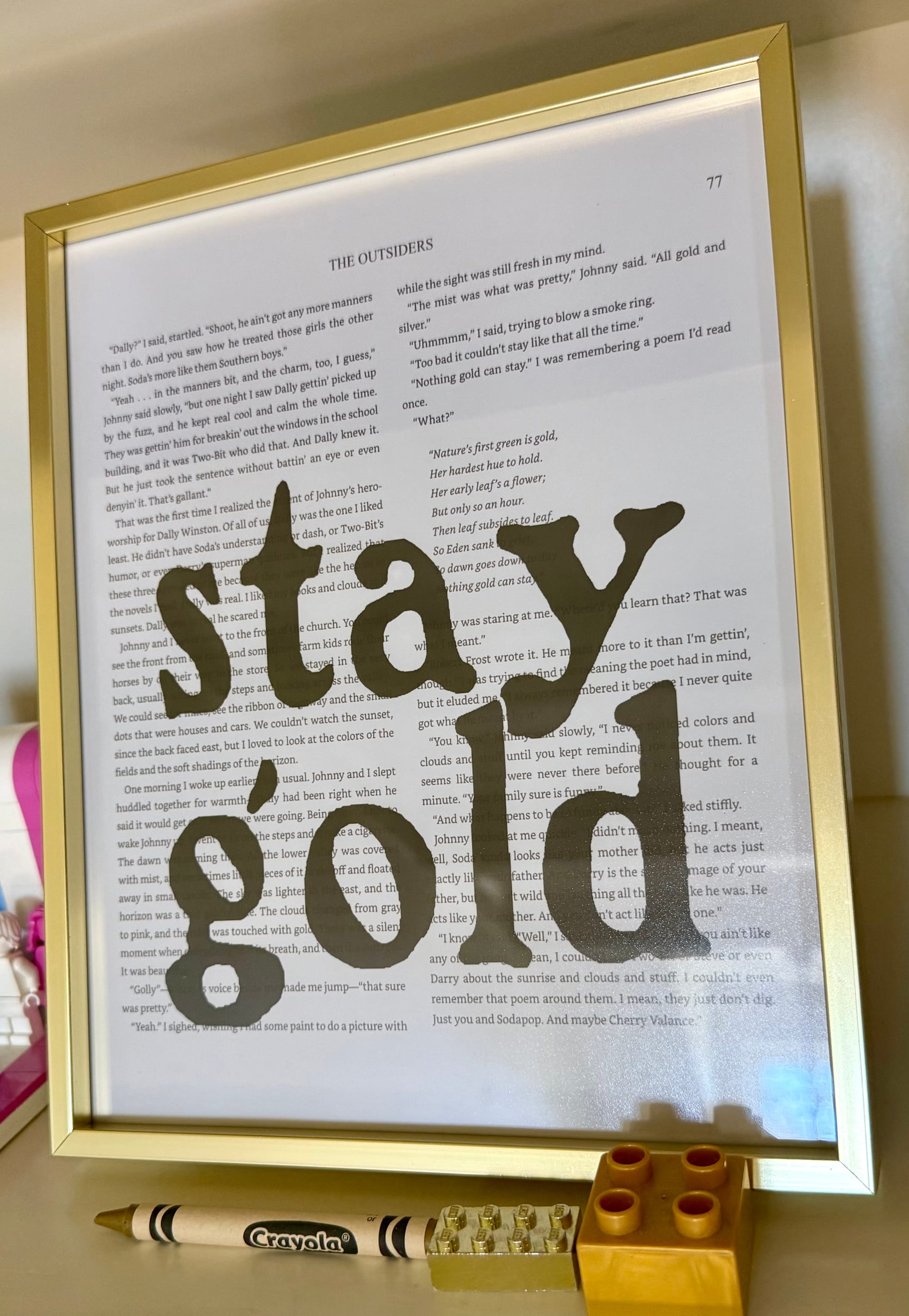

A pivotal moment in his understanding came during a high school theater performance of S.E. Hinton’s "The Outsiders." Although he knew the iconic phrase "stay gold" from the 1983 film adaptation, watching teenage actors from his own community perform the full story revealed its deeper meaning to Atwood: the imperative of sharing the American Dream. This realization formed the bedrock of his essay, "Stay Gold, America," and the subsequent "Pledge to Share the American Dream."

A Two-Pronged Philanthropic Commitment

The "Pledge to Share the American Dream" outlines both immediate and long-term commitments from Atwood’s family. In the short term, the family has made eight $1 million donations to a diverse array of non-profit organizations. These include Team Rubicon (disaster relief), Children’s Hunger Fund (food security), PEN America (literary freedom), The Trevor Project (LGBTQ+ youth crisis intervention), NAACP Legal Defense and Educational Fund (racial justice), First Generation Investors (financial literacy for underserved youth), Global Refuge (refugee support), and Planned Parenthood (reproductive healthcare).

Beyond these social impact organizations, the pledge also includes significant investments in bolstering America’s technical infrastructure. Additional $1 million donations went to Wikipedia, The Internet Archive, The Common Crawl Foundation, Let’s Encrypt, pioneering independent internet journalism, and several other critical open-source software projects that underpin much of the modern digital world. Atwood encouraged all Americans to contribute to organizations that effectively assist those in need, emphasizing the immediate necessity of addressing acute challenges.

However, Atwood stressed that short-term fixes are insufficient. The second, more ambitious act of the pledge involves a deeper, long-term commitment: over the next five years, Atwood’s family will dedicate half of their remaining wealth to foundational efforts aimed at ensuring all Americans have equitable access to the American Dream. This long-term strategy, he acknowledged, will span decades and require sustained effort.

Personal Journey and the Erosion of Opportunity

Atwood shared his own challenging path to the American Dream, recounting his parents’ origins in deep poverty in rural West Virginia and North Carolina. Despite humble beginnings, their eventual ascent to the lower middle class in Virginia, coupled with their unconditional love, provided the essential foundation for his success. He highlighted the transformative power of a solid public education in Chesterfield County, Virginia, and the invaluable privilege of an affordable state education at the University of Virginia—an institution deeply rooted in Thomas Jefferson’s ideals.

Jefferson, a man Atwood described as a "living paradox" trapped in the values of his era, nonetheless penned the revolutionary words "Life, liberty, and the pursuit of happiness" into the Declaration of Independence. These words, Atwood asserted, define America’s fundamental shared values, even if the nation has not always lived up to them. He underscored that the American Dream is not about individual, isolated success, but about collective achievement and mutual connection.

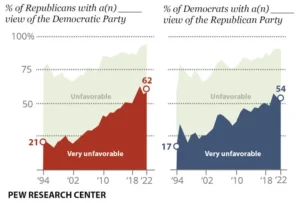

Atwood expressed long-standing concern over wealth concentration in America, citing a 2012 video by Politizane that starkly illustrated its extent. This concern intensified as he recognized alarming parallels with the late 19th-century American Gilded Age, a term coined by historians referencing Mark Twain’s 1873 novel. The First Gilded Age was characterized by rapid industrialization, vast wealth accumulation by a few, hazardous working conditions, and violent labor disputes like the Homestead Strike of 1892, where workers clashed with Pinkerton guards. Thousands died due to negligible safety regulations, as employers prioritized profit over human welfare.

The "Second Gilded Age": A Crisis of Access

While drafting his essay in January 2025, Atwood noted that America had officially entered a period of wealth concentration surpassing any previous era in its history. He cited 2021 data showing that the top 1% of households controlled 32% of all wealth, while the bottom 50% held a mere 2.6%, and he emphasized that this disparity has only worsened in the intervening years. "We can no longer say ‘Gilded Age.’ We must now say ‘The First Gilded Age,’" Atwood declared, asserting that the nation is currently experiencing its "second Gilded Age."

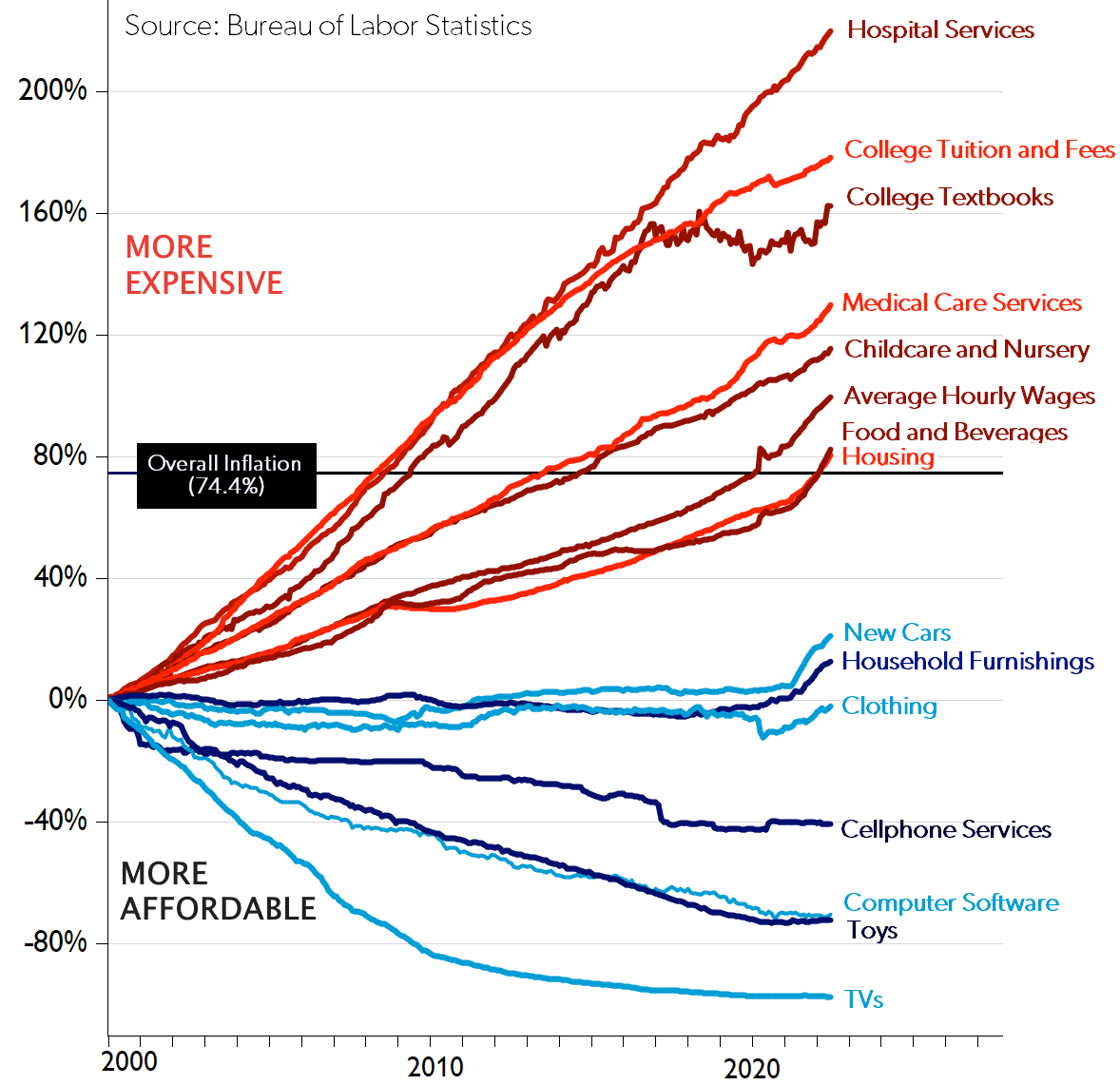

In this contemporary iteration, the path to the American Dream is increasingly obstructed for many. Unaffordable education, inaccessible healthcare, and a severe lack of affordable housing trap millions in cycles of debt and instability. "They have no stable foundation to build their lives," Atwood lamented. "They watch desperately, working as hard as they can, while life simply passes them by, without even the freedom to choose their own lives." This crisis, he argued, represents a profound betrayal of the promise of opportunity extended at the nation’s founding.

Guaranteed Minimum Income: A Path Less Traveled

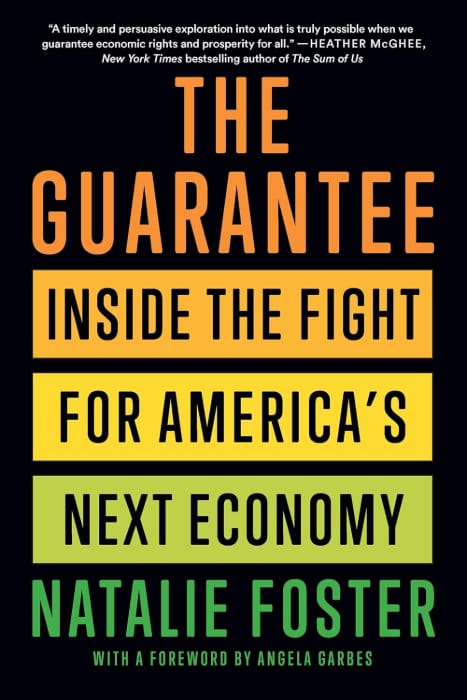

Acknowledging the complexity of these challenges, Atwood proposed a foundational solution: a Guaranteed Minimum Income (GMI), advocated by Natalie Foster, co-founder of the Economic Security Project. The concept, while seemingly novel to some, has deep historical roots in American discourse and policy.

A Chronology of Guaranteed Income Concepts in America:

- 1797: Thomas Paine’s "Agrarian Justice": The revolutionary thinker proposed a progressive estate tax to fund a national pension for the elderly and a lump-sum payment for young adults, intended to compensate citizens for the loss of common lands. While not implemented, it was an early articulation of wealth redistribution for social security.

- 1935: Social Security Act: Born from the economic devastation of the Great Depression, President Franklin D. Roosevelt’s New Deal programs sought to provide economic security. Social Security, providing guaranteed income for retirees, proved immensely successful. Before its implementation, half of American seniors lived in poverty; today, that figure is approximately 10%.

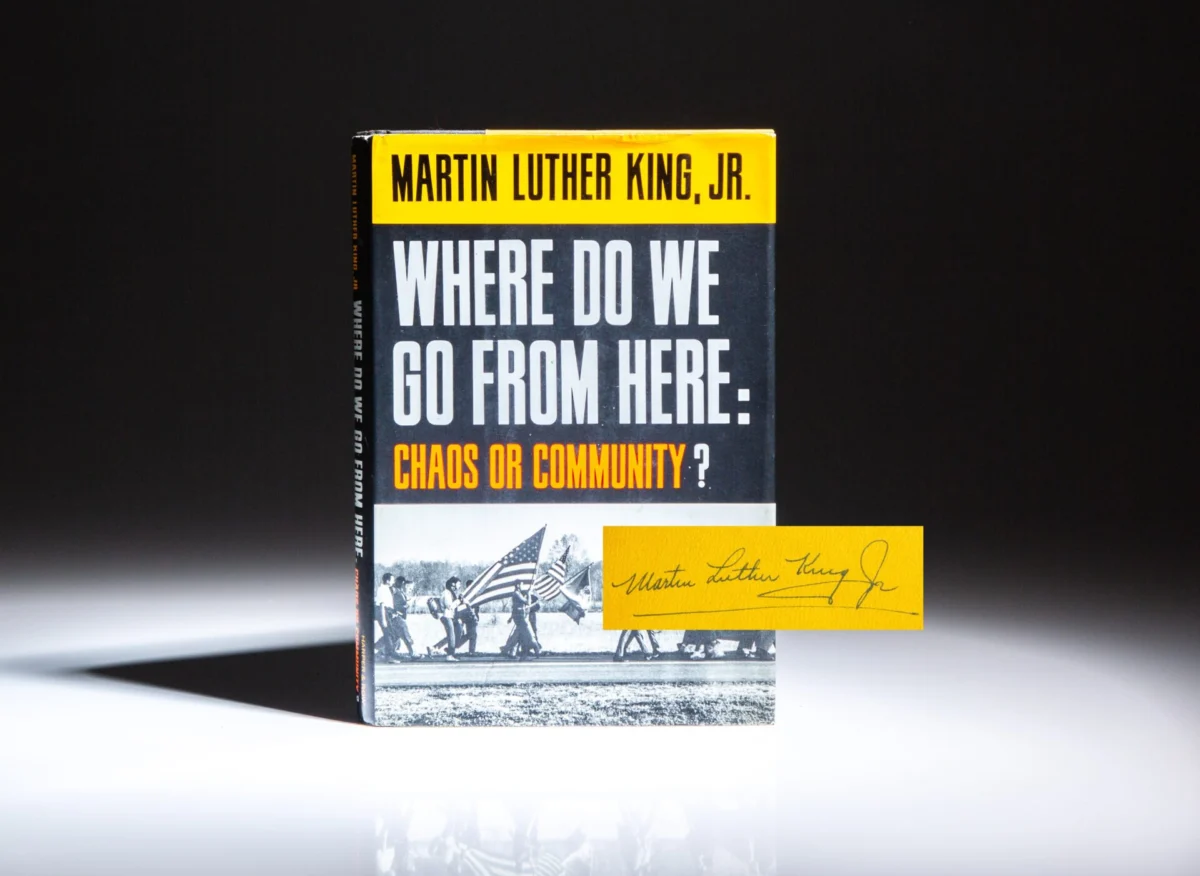

- 1967: Martin Luther King Jr.’s "Where Do We Go From Here: Chaos or Community?": Dr. King made a powerful moral case for a form of Universal Basic Income (UBI), believing economic insecurity to be the root of all inequality. He argued that direct cash disbursements were the simplest and most effective way to combat poverty and empower individuals.

- 1972: Supplemental Security Income (SSI): Congress established this program to provide direct cash assistance to elderly, blind, and disabled individuals with little or no income. As of January 2025, over 7.3 million Americans rely on SSI for essentials like food, housing, and medical expenses.

- 1975: Earned Income Tax Credit (EITC): Introduced by the Tax Reduction Act, the EITC is a refundable tax credit for low-to-moderate-income working individuals and couples, particularly those with children. It incentivizes work and significantly boosts the income of low-wage earners. In 2023, it lifted approximately 6.4 million people, including 3.4 million children, out of poverty, making it the second most effective anti-poverty tool after Social Security, according to the Census Bureau.

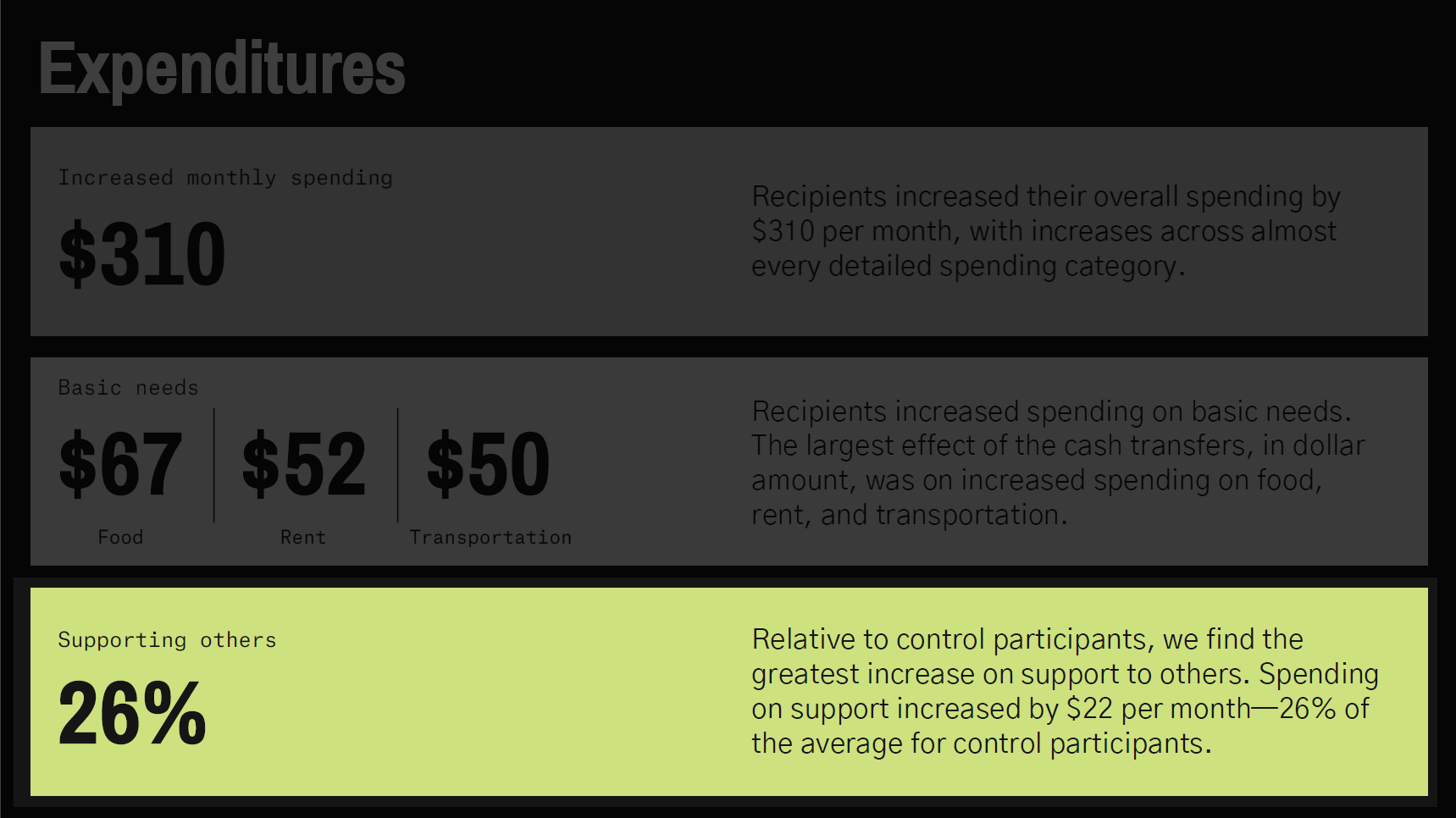

- 2019: Stockton Economic Empowerment Demonstration (SEED): Directly inspired by King’s vision, then-26-year-old Mayor Michael Tubbs launched this $3 million initiative in Stockton, California. It provided 125 residents with $500 per month in unconditional cash payments for two years. The program’s findings were overwhelmingly positive, demonstrating improved financial stability, increased full-time employment, and enhanced well-being among recipients.

Atwood referenced Robert Frost’s poem "The Road Not Taken," suggesting that GMI represents the "path less traveled by" in addressing economic insecurity—a simpler, more practical, and scalable approach with minimal bureaucracy.

A New GMI Initiative in Rural America

To implement this vision, Atwood’s family is partnering with GiveDirectly, a leader in direct cash transfer programs with extensive GMI studies in the U.S., and OpenResearch, which in 2023 completed the largest and most detailed GMI study in the country. Together, they will launch a new Guaranteed Minimum Income initiative specifically targeting rural American communities.

The focus on rural areas is strategic and informed by data. Rural counties consistently exhibit higher poverty rates, fewer job opportunities, lower wages, and reduced access to essential services like healthcare and education. Long-standing pockets of extreme poverty exist in regions like Appalachia, the Mississippi Delta, and American Indian reservations, with some counties experiencing poverty rates as high as 55.8% (Oglala Lakota, SD) or 37.6% (McDowell, WV). Urban areas rarely see such severe numbers, highlighting the amplified challenges faced by the rural poor. Furthermore, rural areas offer smaller populations, making them ideal for tightly controlled scientific studies that can be carefully scaled and refined for broader application.

The initiative will involve working with existing local groups to coordinate opt-in GMI studies. Outreach and mentorship will be provided to participants, emphasizing a collaborative "teamwork between Americans" approach. Veterans, recognized for their leadership skills and community commitment, are envisioned to play a crucial role in supporting and executing these GMI programs, ensuring they align with core American values of self-reliance and community service. Partnerships with established community organizations—churches, civic groups, community colleges, and local businesses—will integrate the GMI studies with existing support systems, fostering synergy rather than creating parallel structures.

GiveDirectly and OpenResearch will build upon their extensive work, meticulously gathering data on key metrics such as employment, entrepreneurship, education, health, and community engagement. Regular interviews with participants will provide qualitative insights, fostering an iterative approach to program improvement.

Implications: Economic Security as the Bedrock of Democracy

Atwood passionately argued that economic security is not merely about individual well-being but serves as the bedrock of democracy itself. When individuals are freed from the constant struggle for basic needs—food, shelter, healthcare—they gain "room to breathe" and true freedom: the freedom to raise children, start businesses, choose careers, volunteer, and crucially, to vote and engage meaningfully in civic life.

He stressed that this initiative transcends ideology or government programs; it is about Americans collectively investing in their future and unlocking unprecedented human potential. Drawing from existing study data, Atwood affirmed the transformative power of even small amounts of money for those in poverty, enabling them to move beyond mere survival and realize their inherent capabilities.

The GMI, Atwood concluded, represents a long-term investment in America’s future, aligning with the aspirational principles enshrined in the Declaration of Independence. He emphasized that democracy is designed to be malleable, capable of adaptation and improvement to better serve its people.

A Call for Activism and Shared Knowledge: The Legacy of Aaron Swartz

In his closing remarks, Atwood invoked the legacy of Aaron Swartz, a precocious programmer and activist who co-developed RSS web feeds and Reddit, and championed open access to information. Swartz believed that publicly funded knowledge, such as court documents from PACER or academic articles from JSTOR, should be freely accessible to all. His attempts to liberate this information led to his arrest and aggressive federal prosecution, which ultimately contributed to his tragic suicide at age 26.

Swartz’s story, Atwood argued, exemplifies the choice to build the public good despite personal risk and embodies the spirit of activism on behalf of America’s defining principles. He urged his audience to embrace this spirit: "I think we should all choose to be activists, to be brave, to stand up for our defining American principles."

Atwood concluded by reiterating two core requests: first, to actively participate in building a more equitable future for America, and second, to support the GMI initiative in rural communities. With his family committing $50 million to this endeavor, he envisioned a future where collective action could amplify this impact manifold. "Decades from now, people will look back and wonder why it took us so long to share our dream of a better, richer, and fuller life with our fellow Americans," he stated. "I believe everyone deserves a fair chance at what was promised when we founded this nation: Life, Liberty, and the pursuit of The American Dream."