A Comprehensive Guide to Fine-Tuning Amazon Nova Models with Data Mixing Using the Nova Forge SDK

This hands-on guide provides a detailed playbook for fine-tuning Amazon Nova models with the Nova Forge SDK. It covers the entire process, from meticulous data preparation and sophisticated data mixing techniques to robust evaluation, offering a repeatable framework adaptable to diverse enterprise AI use cases. This article represents the second installment in the Nova Forge SDK series, building upon the foundational introduction to the SDK and the initial exploration of customization experiments.

The core focus of this guide is data mixing, a pivotal technique that allows for the fine-tuning of models on domain-specific datasets without compromising their inherent general capabilities. Previous discussions have underscored the importance of this approach. By strategically blending proprietary customer data with Amazon-curated datasets, it’s possible to maintain near-baseline performance on broad benchmarks like Massive Multitask Language Understanding (MMLU) while simultaneously achieving substantial improvements, such as a 12-point F1 score enhancement on a Voice of Customer classification task spanning 1,420 leaf categories. In stark contrast, attempts to fine-tune open-source models solely on customer data often result in a significant degradation of general intelligence. This guide demonstrates the practical implementation of this powerful technique.

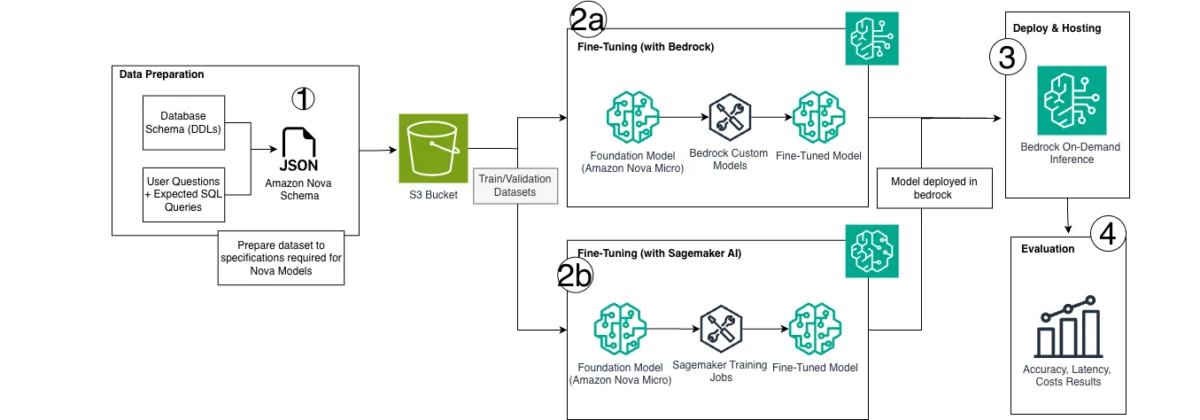

Solution Overview: A Five-Stage Workflow

The process of fine-tuning Amazon Nova models with data mixing, as facilitated by the Nova Forge SDK, can be broadly segmented into five distinct stages:

- SDK Installation and Dependency Management: Ensuring the necessary tools and libraries are correctly installed and configured for the development environment.

- AWS Resource Configuration: Setting up essential cloud infrastructure, including S3 buckets for data storage and defining IAM roles for secure access.

- Data Preparation and Validation: Transforming raw domain-specific data into a format compatible with Nova models, including sanitization and structural validation.

- Training Configuration and Execution with Data Mixing: Orchestrating the fine-tuning process, critically enabling and configuring the data mixing feature, and launching the training job.

- Model Evaluation: Assessing the performance of the fine-tuned model across both domain-specific tasks and general capabilities to ensure a balanced outcome.

Prerequisites for a Successful Fine-Tuning Workflow

Before embarking on this fine-tuning journey, users must ensure they have the following prerequisites in place:

- AWS Account: Access to an Amazon Web Services account with appropriate permissions for SageMaker, S3, and IAM.

- AWS CLI and SDKs: The Amazon Web Services Command Line Interface (AWS CLI) and the AWS SDK for Python (Boto3) installed and configured.

- SageMaker HyperPod CLI Tooling: Essential for managing the distributed training environment.

- Python Environment: A compatible Python environment (e.g., using virtual environments) with necessary libraries.

- Jupyter Notebook Environment: A platform like Jupyter Notebook or JupyterLab for interactive development and execution of code.

Cost Consideration: This walkthrough uses four ml.p5.48xlarge instances for training and one for evaluation. These are high-performance GPU instances designed for demanding AI workloads. Start with a brief test run, setting max_steps to a low value such as 5, to validate the configuration before committing to a full-scale training session. For current pricing, consult the official Amazon SageMaker pricing page.

Step 1: Installing the Nova Forge SDK and Dependencies

The initial step involves setting up the Nova Forge SDK and its associated dependencies. This is typically accomplished by leveraging the SageMaker HyperPod CLI tooling. Users can download this tooling directly from the Nova Forge S3 distribution bucket, which is provided during the Nova Forge onboarding process. Alternatively, a convenient installer script is available, which automates the installation of dependencies from the private S3 bucket and establishes a dedicated virtual environment for the project.

The following commands illustrate the process of downloading and executing the HyperPod CLI installer script:

# Download the HyperPod CLI installer from GitHub (only applicable for Forge)

# The raw URL is used so that curl fetches the script itself rather than the GitHub HTML page

curl -O https://raw.githubusercontent.com/aws-samples/amazon-nova-samples/main/customization/nova-forge-hyperpod-cli-installation/install_hp_cli.sh

# Run the installer

bash install_hp_cli.sh

Once the HyperPod CLI is installed and the virtual environment is activated, the Nova Forge SDK itself needs to be installed. This SDK provides the high-level APIs for data preparation, model training, and evaluation. The installation uses pip:

pip install --upgrade botocore awscli

pip install amzn-nova-forge

pip install datasets huggingface_hub pandas pyarrow

After all dependencies are installed, activate the virtual environment and register it as a kernel for use within a Jupyter notebook environment. This ensures that notebook sessions have access to the correct libraries and configurations.

source ~/hyperpod-cli-venv/bin/activate

pip install ipykernel

python -m ipykernel install --user --name=hyperpod-cli-venv --display-name="Forge (hyperpod-cli-venv)"

jupyter kernelspec list

To verify that the SDK has been installed correctly and is accessible, a simple import statement can be executed:

from amzn_nova_forge import *

print("SDK imported successfully")

Step 2: Configuring AWS Resources

Effective fine-tuning requires dedicated cloud resources. The first step in this phase is to create an Amazon S3 bucket. This bucket will serve as the central repository for all training data and model outputs generated during the fine-tuning process. Following the creation of the S3 bucket, it is imperative to grant the HyperPod execution role the necessary permissions to access this bucket. This ensures secure and controlled data flow between the training environment and storage.

The following Python code snippet, using Boto3, demonstrates how to create an S3 bucket and configure its bucket policy to allow access for the HyperPod execution role:

import boto3

import time

import json

TIMESTAMP = int(time.time())

S3_BUCKET = f"nova-forge-customisation-{TIMESTAMP}"

S3_DATA_PATH = f"s3://{S3_BUCKET}/demo/input"

S3_OUTPUT_PATH = f"s3://{S3_BUCKET}/demo/output"

sts = boto3.client("sts")

s3 = boto3.client("s3")

ACCOUNT_ID = sts.get_caller_identity()["Account"]

REGION = boto3.session.Session().region_name

# Create the S3 bucket

if REGION == "us-east-1":

    # us-east-1 does not accept an explicit LocationConstraint

    s3.create_bucket(Bucket=S3_BUCKET)

else:

    s3.create_bucket(

        Bucket=S3_BUCKET,

        CreateBucketConfiguration={"LocationConstraint": REGION},

    )

# Grant HyperPod execution role access

HYPERPOD_ROLE_ARN = f"arn:aws:iam::{ACCOUNT_ID}:role/<your-hyperpod-execution-role>"  # Replace with your actual role ARN

bucket_policy = {

    "Version": "2012-10-17",

    "Statement": [

        {

            "Sid": "AllowHyperPodAccess",

            "Effect": "Allow",

            "Principal": {"AWS": HYPERPOD_ROLE_ARN},

            "Action": ["s3:GetObject", "s3:PutObject", "s3:DeleteObject", "s3:ListBucket"],

            "Resource": [

                f"arn:aws:s3:::{S3_BUCKET}",

                f"arn:aws:s3:::{S3_BUCKET}/*",

            ],

        }

    ],

}

s3.put_bucket_policy(Bucket=S3_BUCKET, Policy=json.dumps(bucket_policy))

Step 3: Preparing Your Training Dataset

The efficacy of any fine-tuning process hinges on the quality and format of the training data. The Nova Forge SDK is designed to accommodate several common data formats, including JSONL, JSON, and CSV. For this demonstration, the publicly accessible MedReason dataset from Hugging Face is utilized. This dataset comprises approximately 32,700 question-answer pairs, specifically curated to facilitate fine-tuning for domain-specific medical reasoning tasks.

Downloading and Sanitizing the Data

A critical aspect of data preparation for Nova models involves token-level validation. Certain tokens can inadvertently conflict with the model’s internal chat template, particularly special delimiters used to delineate system, user, and assistant turns during training. Literal strings such as System: or Assistant: could be misinterpreted by the model as turn boundaries, thereby corrupting the training signal.

The sanitization process addresses this by introducing a space before the colon (e.g., transforming System: to System :). This subtle modification preserves readability while effectively breaking the pattern match that could lead to misinterpretation. Additionally, special tokens like [EOS] and <image>, which possess reserved meanings within the model’s vocabulary, are stripped to prevent conflicts.

The following Python code outlines the process of downloading the MedReason dataset and applying the necessary sanitization:

from huggingface_hub import hf_hub_download

import pandas as pd

import json

import re

# Download the dataset

jsonl_path = hf_hub_download(

    repo_id="UCSC-VLAA/MedReason",

    filename="ours_quality_33000.jsonl",

    repo_type="dataset",

    local_dir=".",

)

df = pd.read_json(jsonl_path, lines=True)

# Tokens that conflict with the model's chat template

INVALID_TOKENS = [

    "System:", "SYSTEM:", "User:", "USER:", "Bot:", "BOT:",

    "Assistant:", "ASSISTANT:", "Thought:", "[EOS]",

    "<image>", "<video>", "<unk>",

]

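def sanitize_text(text):

    for token in INVALID_TOKENS:

        if ":" in token:

            word = token[:-1]

            # Insert a space before the colon; the capture group keeps the original casing

            text = re.sub(rf"\b({re.escape(word)}):", r"\1 :", text, flags=re.IGNORECASE)

        else:

            # Reserved special tokens are removed outright

            text = text.replace(token, "")

    return text.strip()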

# Write sanitized JSONL

with open("training_data.jsonl", "w") as f:

    for _, row in df.iterrows():

        f.write(json.dumps({

            "question": sanitize_text(row["question"]),

            "answer": sanitize_text(row["answer"]),

        }) + "\n")

print(f"Dataset saved: training_data.jsonl ({len(df)} examples)")

For an additional layer of validation, users can run a provided script to confirm that their data does not contain any reserved keywords that might interfere with the model’s training. This script can be found at: https://github.com/aws-samples/amazon-nova-samples/tree/main/customization/bedrock-finetuning/understanding/dataset_validation.
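
As a lightweight local complement to such a script, the sketch below scans text for the reserved strings listed earlier and reports any that remain. The function name find_reserved_tokens and the sample records are illustrative assumptions; they are not part of the Nova Forge SDK or of the linked validation script.

```python
import re

# Reserved strings taken from the INVALID_TOKENS list used in this guide.
ROLE_WORDS = ["System", "User", "Bot", "Assistant", "Thought"]
SPECIAL_TOKENS = ["[EOS]", "<image>", "<video>", "<unk>"]

# Matches a role word immediately followed by a colon, in any casing.
ROLE_PATTERN = re.compile(
    r"\b(" + "|".join(map(re.escape, ROLE_WORDS)) + r"):", re.IGNORECASE
)


def find_reserved_tokens(text):
    """Return the reserved strings still present in `text`."""
    found = [match.group(0) for match in ROLE_PATTERN.finditer(text)]
    found += [token for token in SPECIAL_TOKENS if token in text]
    return found


# Hypothetical records, for illustration only.
records = [
    {"question": "What does the note say?", "answer": "Assistant: see chart [EOS]"},
    {"question": "Define hypertension.", "answer": "Elevated blood pressure."},
]

for index, record in enumerate(records):
    hits = [
        token
        for field in ("question", "answer")
        for token in find_reserved_tokens(record[field])
    ]
    print(index, hits if hits else "clean")
```

Text that has already been sanitized (for example "System :" with a space before the colon) passes this scan, because the pattern requires the colon to follow the role word directly.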

Loading, Transforming, and Validating with the SDK

The Nova Forge SDK offers a JSONLDatasetLoader utility that simplifies the conversion of raw data into the precise structure required by Nova models. The transform() method is particularly crucial; it reformats each question-answer pair into the Nova chat template format. This structured, turn-based representation, complete with appropriate role tags and delimiters, is essential for effective training.

Before Transform (Raw JSONL):

{

    "question": "What are the causes of chest pain in a 45-year-old patient?",

    "answer": "Chest pain in a 45-year-old can result from cardiac causes such as..."

}

After Transform (Nova Chat Template Format):

{

    "messages": [

        {"role": "user", "content": "What are the causes of chest pain in a 45-year-old patient?"},

        {"role": "assistant", "content": "Chest pain in a 45-year-old can result from cardiac causes such as..."}

    ]

}

Following transformation, the validate() method performs a comprehensive check of the data. This includes verifying the correctness of the chat template structure, ensuring the absence of any remaining invalid tokens, and confirming that the data adheres to the specific requirements for the chosen model and training methodology.
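
To give a feel for the structural part of such a check, here is a minimal local sketch that inspects one record in the messages layout shown above. It is not the SDK's validate() implementation: the function name check_chat_record and the specific rules (only user and assistant roles, strictly alternating turns, an assistant turn last) are assumptions chosen for illustration.

```python
VALID_ROLES = ("user", "assistant")  # the roles that appear in the example above


def check_chat_record(record):
    """Return a list of structural problems found in one chat-format record."""
    messages = record.get("messages")
    if not isinstance(messages, list) or not messages:
        return ["'messages' must be a non-empty list"]

    problems = []
    for position, message in enumerate(messages):
        role = message.get("role")
        content = message.get("content")
        if role not in VALID_ROLES:
            problems.append(f"message {position}: unexpected role {role!r}")
        if not isinstance(content, str) or not content.strip():
            problems.append(f"message {position}: empty content")

    # Assumed rule: turns alternate user, assistant, user, assistant, ...
    turns = [message.get("role") for message in messages]
    expected = ["user", "assistant"] * (len(turns) // 2 + 1)
    if turns != expected[: len(turns)]:
        problems.append("turns do not alternate user/assistant")
    if turns[-1] != "assistant":
        problems.append("last turn is not from the assistant")
    return problems


record = {
    "messages": [
        {"role": "user", "content": "What are the causes of chest pain?"},
        {"role": "assistant", "content": "Cardiac causes such as..."},
    ]
}
print(check_chat_record(record) or "record looks well-formed")
```

Running a check like this before uploading can surface malformed records early, but the SDK's own validation remains the authority on what the training job accepts.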

The following code illustrates the usage of these loader functionalities:

# Initialize the loader, mapping your column names

loader = JSONLDatasetLoader(

    question="question",

    answer="answer",

)

loader.load("training_data.jsonl")

# Preview raw data

loader.show(n=3)

# Transform into Nova's expected chat template format

loader.transform(method=TrainingMethod.SFT_LORA, model=Model.NOVA_LITE_2)

# Preview transformed data to verify the structure

loader.show(n=3)

# Validate — prints "Validation completed" if successful

loader.validate(method=TrainingMethod.SFT_LORA, model=Model.NOVA_LITE_2)

train_path = loader.save(f"{S3_DATA_PATH}/train.jsonl")

print(f"Training data: {train_path}")

Step 4: Configuring and Launching Training with Data Mixing

Data mixing is a core feature that, when enabled, instructs Nova Forge to dynamically blend the user’s domain-specific training data with Amazon-curated datasets during the fine-tuning process. This strategic integration is paramount in preventing the model from "forgetting" its pre-existing general knowledge and capabilities as it learns new domain-specific information.

Understanding Training Methods: LoRA vs. Full-Rank SFT

Nova Forge offers flexibility in fine-tuning approaches. This guide focuses on Supervised Fine-Tuning (SFT) with LoRA (Low-Rank Adaptation), denoted as TrainingMethod.SFT_LORA. LoRA is a parameter-efficient method that significantly reduces computational cost and training time by updating only a small subset of adapter weights, rather than the entire model’s parameters. It is generally the recommended starting point for most fine-tuning tasks due to its efficiency and effectiveness.

Alternatively, Nova Forge supports full-rank SFT. This method updates all model parameters, potentially allowing for deeper domain knowledge integration. However, it demands more computational resources and is more susceptible to "catastrophic forgetting," making data mixing even more critical. The preceding article in this series showcased the performance of full-rank SFT. Full-rank fine-tuning is advisable when LoRA does not achieve the desired domain-specific performance or when a more profound model adaptation is required.
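
To make the efficiency argument concrete: for a single weight matrix of shape d x k, a rank-r LoRA adapter trains two small matrices with r * (d + k) parameters in total, whereas a full-rank update touches all d * k parameters. The short calculation below illustrates the gap. The layer shape and rank are hypothetical values picked for the arithmetic; they do not describe Nova's architecture or the SDK's LoRA settings.

```python
def lora_trainable_params(d, k, rank):
    """Parameters in a rank-`rank` LoRA adapter (A: rank x k, B: d x rank)."""
    return rank * (d + k)


def full_rank_params(d, k):
    """Parameters updated when the whole d x k matrix is trained."""
    return d * k


d, k, rank = 4096, 4096, 16  # hypothetical layer shape and adapter rank
lora = lora_trainable_params(d, k, rank)
full = full_rank_params(d, k)
print(f"LoRA: {lora:,} params, full-rank: {full:,} params, ratio: {lora / full:.2%}")
```

For these example values the adapter holds well under 1% of the parameters of the matrix it adapts, which is where the savings in compute and memory come from.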

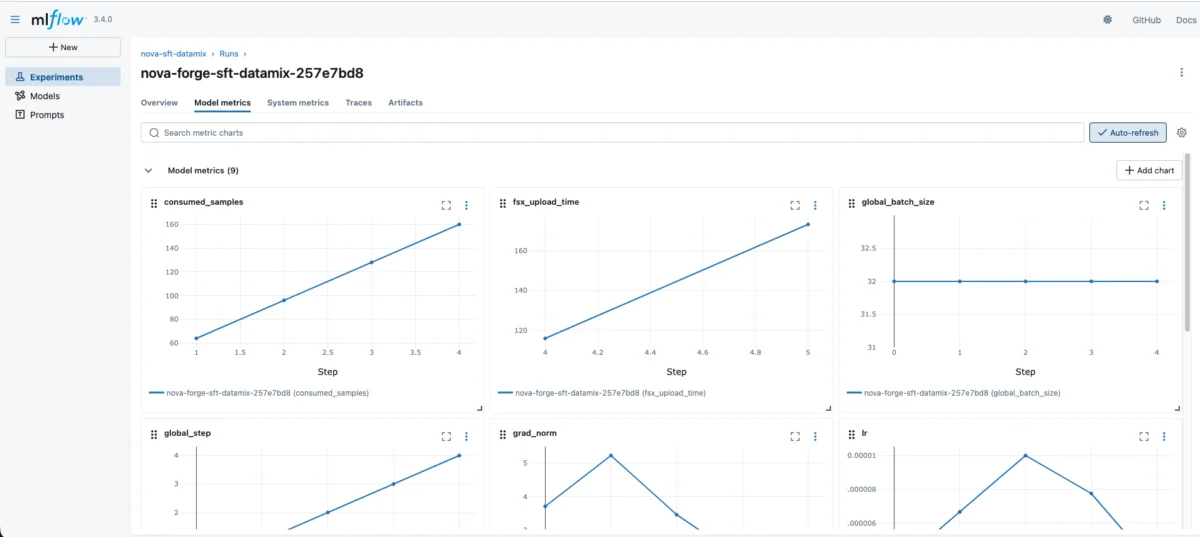

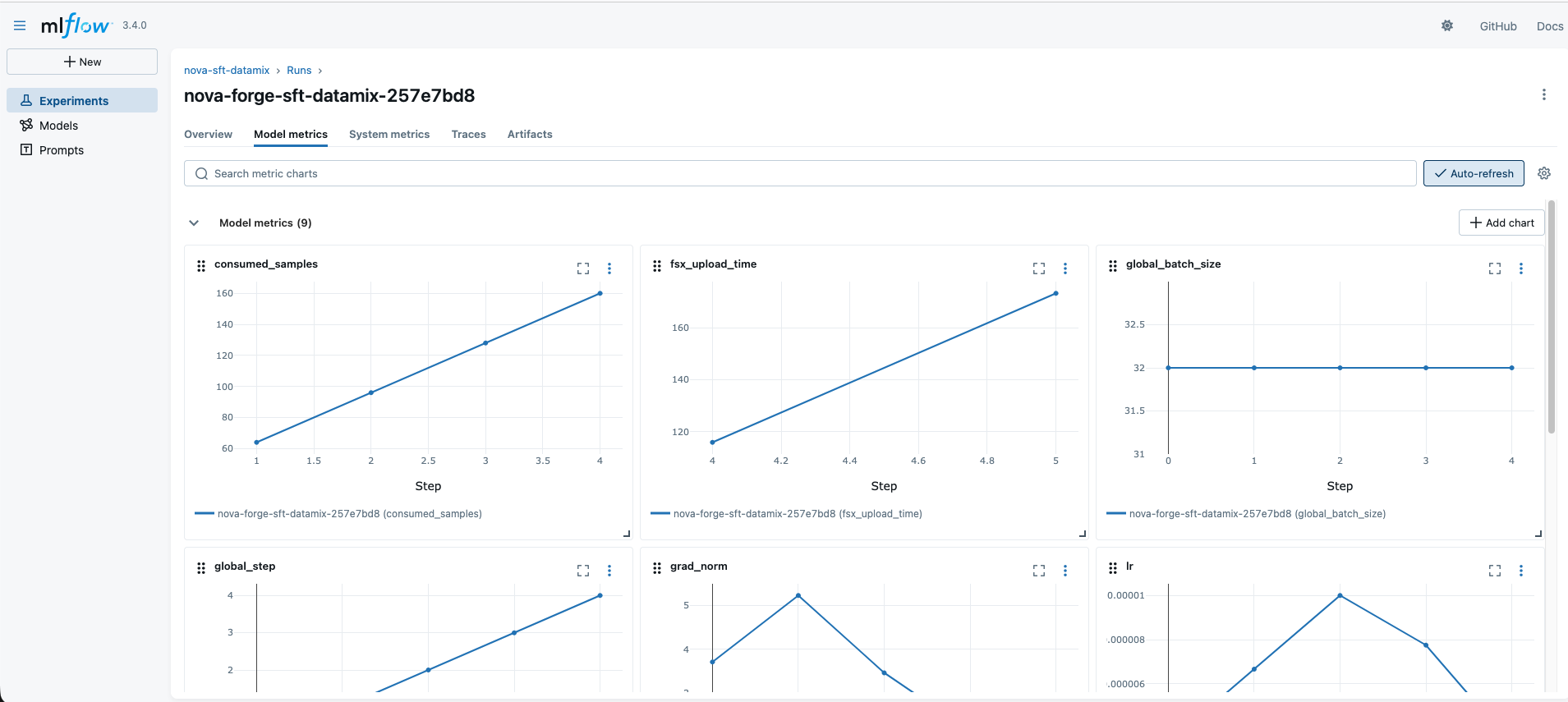

Configuring the Runtime and MLflow for Monitoring

To orchestrate the training job, a runtime environment needs to be configured. This involves specifying the compute infrastructure, including the instance type and count, as well as the namespace within the SageMaker HyperPod cluster. Additionally, integrating with MLflow is crucial for comprehensive monitoring of training progress and experiment tracking.

from amzn_nova_forge.model.model_enums import Platform  # package name aligned with the amzn_nova_forge imports used elsewhere in this guide

cluster_name = "nova-forge-hyperpod"

instance_type = "ml.p5.48xlarge"

instance_count = 4

namespace = "kubeflow"

runtime = SMHPRuntimeManager(

    instance_type=instance_type,

    instance_count=instance_count,

    cluster_name=cluster_name,

    namespace=namespace,

)

MLFLOW_APP_ID = "<your-mlflow-app-id>"  # Example: "app-XXXXXXXXXXXX"

mlflow_app_arn = f"arn:aws:sagemaker:{REGION}:{ACCOUNT_ID}:mlflow-app/{MLFLOW_APP_ID}"

mlflow_monitor = MLflowMonitor(

    tracking_uri=mlflow_app_arn,

    experiment_name="nova-sft-datamix",

)

Creating the Customizer with Data Mixing Enabled

The NovaModelCustomizer class is the central component for initiating the fine-tuning process. To enable the data mixing feature, the data_mixing_enabled parameter must be set to True during the instantiation of the customizer.

customizer = NovaModelCustomizer(

    model=Model.NOVA_LITE_2,

    method=TrainingMethod.SFT_LORA,

    infra=runtime,

    data_s3_path=f"{S3_DATA_PATH}/train.jsonl",

    output_s3_path=f"{S3_OUTPUT_PATH}/",

    mlflow_monitor=mlflow_monitor,

    data_mixing_enabled=True,

)

Understanding and Tuning the Data Mixing Configuration

Data mixing operates by carefully controlling the composition of training batches. The customer_data_percent parameter dictates the proportion of each batch that is derived from the user’s domain-specific data. The remaining portion is populated by Amazon-curated datasets, with individual nova_*_percent parameters governing the relative importance of each capability category within that Nova-curated segment.

For instance, the following configuration would allocate 50% of each batch to customer data, with the remaining 50% distributed among various Nova capabilities. The Nova-side percentages must collectively sum to 100%, with each value representing that category’s share of the Nova-curated portion of the batch.

# View the default mixing ratios

print(customizer.get_data_mixing_config())

# Override the default ratios based on specific priorities

customizer.set_data_mixing_config({

    "customer_data_percent": 50,

    "nova_agents_percent": 1,

    "nova_baseline_percent": 10,

    "nova_chat_percent": 0.5,

    "nova_factuality_percent": 0.1,

    "nova_identity_percent": 1,

    "nova_long-context_percent": 1,

    "nova_math_percent": 2,

    "nova_rai_percent": 1,

    "nova_instruction-following_percent": 13,

    "nova_stem_percent": 10.5,

    "nova_planning_percent": 10,

    "nova_reasoning-chat_percent": 0.5,

    "nova_reasoning-code_percent": 0.5,

    "nova_reasoning-factuality_percent": 0.5,

    "nova_reasoning-instruction-following_percent": 45,

    "nova_reasoning-math_percent": 0.5,

    "nova_reasoning-planning_percent": 0.5,

    "nova_reasoning-rag_percent": 0.4,

    "nova_reasoning-rai_percent": 0.5,

    "nova_reasoning-stem_percent": 0.4,

    "nova_rag_percent": 1,

    "nova_translation_percent": 0.1,

})

Guidance on Tuning the Data Mix:

| Parameter | What it Controls | Guidance |
|---|---|---|
| customer_data_percent | Share of domain data in each training batch. | Higher values enhance domain specialization but increase forgetting risk. 50% is a recommended starting point. |
| nova_instruction-following_percent | Weight of instruction-following examples. | Keep high if the model needs to adhere to structured prompts or output formats in production. |
| nova_reasoning-*_percent | Weights for various reasoning capabilities. | Increase if downstream tasks require multi-step reasoning (e.g., math, code, planning). |
| nova_rai_percent | Responsible AI alignment data. | Maintain a non-zero value to preserve safety behaviors and ethical considerations. |
| nova_baseline_percent | Core factual knowledge. | Crucial for retaining broad world knowledge and general factual accuracy. |

Tip: A strategic approach is to begin with the default data mixing ratios, execute a training job, and then evaluate the resulting model on both the domain-specific task and general benchmarks like MMLU. This iterative process allows for fine-tuning the mix until the optimal balance between domain expertise and general intelligence is achieved. As demonstrated in previous research, even a 75% customer-to-25% Nova data split can preserve near-baseline MMLU scores while yielding significant improvements on complex classification tasks.
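
The batch composition described in this section can be checked with a few lines of arithmetic before a job is submitted. The sketch below confirms that the Nova-side percentages sum to 100 and converts each one into its share of the whole batch. The helper name effective_batch_shares and the abbreviated three-category config are assumptions made for illustration; the SDK may perform its own validation differently.

```python
import math


def effective_batch_shares(config):
    """Convert a mixing config into each source's share of the whole batch, in percent."""
    customer = config["customer_data_percent"]
    nova = {key: value for key, value in config.items() if key.startswith("nova_")}

    nova_total = sum(nova.values())
    if not math.isclose(nova_total, 100.0, abs_tol=1e-6):
        raise ValueError(f"Nova-side percentages sum to {nova_total}, expected 100")

    nova_portion = (100.0 - customer) / 100.0  # fraction of the batch left for Nova data
    shares = {"customer_data": float(customer)}
    for key, value in nova.items():
        shares[key] = value * nova_portion
    return shares


# Abbreviated, hypothetical config: three Nova categories instead of the full list.
example = {
    "customer_data_percent": 50,
    "nova_baseline_percent": 40,
    "nova_math_percent": 10,
    "nova_rai_percent": 50,
}
for name, share in effective_batch_shares(example).items():
    print(f"{name}: {share:.1f}% of each batch")
```

With customer data at 50%, a Nova category weighted at 10% ends up as 5% of each batch, which is a useful sanity check when raising or lowering individual weights.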

Launching the Training Job

The overrides parameter allows for fine-grained control over key training hyperparameters, enabling users to tailor the training process to their specific needs.

| Parameter | Description | Guidance |
|---|---|---|
| lr | Learning rate | 1e-5 is a common and effective default for LoRA fine-tuning. |
| warmup_steps | Steps to linearly ramp up the learning rate from 0 | Typically set to 5-10% of total steps. Adjust proportionally to max_steps. |
| global_batch_size | Number of examples per gradient update | Larger batches offer more stable gradients but consume more memory. |
| max_length | Maximum sequence length in tokens | Adapt based on your data’s characteristics. 65536 supports long-context use cases; reduce for shorter data. |
| max_steps | Total number of training steps | Start with a small number (e.g., 5-10) to validate your setup. One epoch is roughly the number of training examples divided by global_batch_size: for ~23k examples and batch size 32, that is ~720 steps. |
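
The step counts in the table follow from simple arithmetic, sketched below. The helper names are illustrative, and the calculation deliberately ignores data mixing (it treats every example in a batch as customer data), so the result should be read as a rough planning figure rather than an exact epoch boundary.

```python
import math


def steps_per_epoch(num_examples, global_batch_size):
    """Steps needed to see every example once, rounding up the last partial batch."""
    return math.ceil(num_examples / global_batch_size)


def warmup_steps_for(max_steps, fraction=0.05):
    """Warmup length as a fraction of the run, with at least one step."""
    return max(1, round(max_steps * fraction))


epoch = steps_per_epoch(23_000, 32)
print("steps per epoch:", epoch)
print("warmup at 5%:", warmup_steps_for(epoch))
print("warmup at 10%:", warmup_steps_for(epoch, fraction=0.10))
```

For a five-step smoke test the same helper returns a single warmup step, so warmup_steps should be scaled back up once max_steps is raised for a real run.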

The training job is launched using the train() method of the customizer:

training_config = {

    "lr": 1e-5,

    "warmup_steps": 2,

    "global_batch_size": 32,

    "max_length": 65536,

    "max_steps": 5,  # Start small to validate; increase for production runs

}

training_result = customizer.train(

    job_name="nova-forge-sft-datamix",

    overrides=training_config,

)

training_result.dump("training_result.json")

print("Training result saved")

Monitoring Training Progress

Continuous monitoring of the training job is essential for tracking progress and identifying potential issues. This can be achieved through the SDK itself or by leveraging AWS CloudWatch.

# Check job status

print(training_result.get_job_status())

# Stream recent logs

customizer.get_logs(limit=50, start_from_head=False)

# Alternatively, use the CloudWatch monitor

monitor = CloudWatchLogMonitor.from_job_result(training_result)

monitor.show_logs(limit=10)

# Poll until completion

import time

while training_result.get_job_status()[1] == "Running":

    time.sleep(60)

Training metrics, including loss curves and the learning rate schedule, are also published to the configured MLflow experiment, which makes it easier to visualize and compare training runs.
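
The polling loop shown earlier in this section runs for as long as the job reports "Running", with no upper bound. A slightly more defensive variant, sketched below, adds a timeout and returns the final status. The function wait_for_job is a hypothetical helper, not an SDK call, and the "Completed" status in the demonstration is a stand-in value rather than a documented SDK state.

```python
import time


def wait_for_job(get_status, poll_seconds=60, timeout_seconds=6 * 3600,
                 running_states=("Running",), sleep=time.sleep, clock=time.monotonic):
    """Poll `get_status()` until it leaves `running_states` or the timeout expires."""
    deadline = clock() + timeout_seconds
    status = get_status()
    while status in running_states:
        if clock() >= deadline:
            raise TimeoutError(f"job still {status!r} after {timeout_seconds} seconds")
        sleep(poll_seconds)
        status = get_status()
    return status


# Stand-in status source for illustration: "Running" twice, then "Completed".
_fake_statuses = iter(["Running", "Running", "Completed"])
final = wait_for_job(lambda: next(_fake_statuses), poll_seconds=0)
print(final)
```

In a notebook, the status source could be a lambda wrapping training_result.get_job_status()[1], mirroring the indexing used in the loop above; that indexing is taken from this guide and should be confirmed against the installed SDK version.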

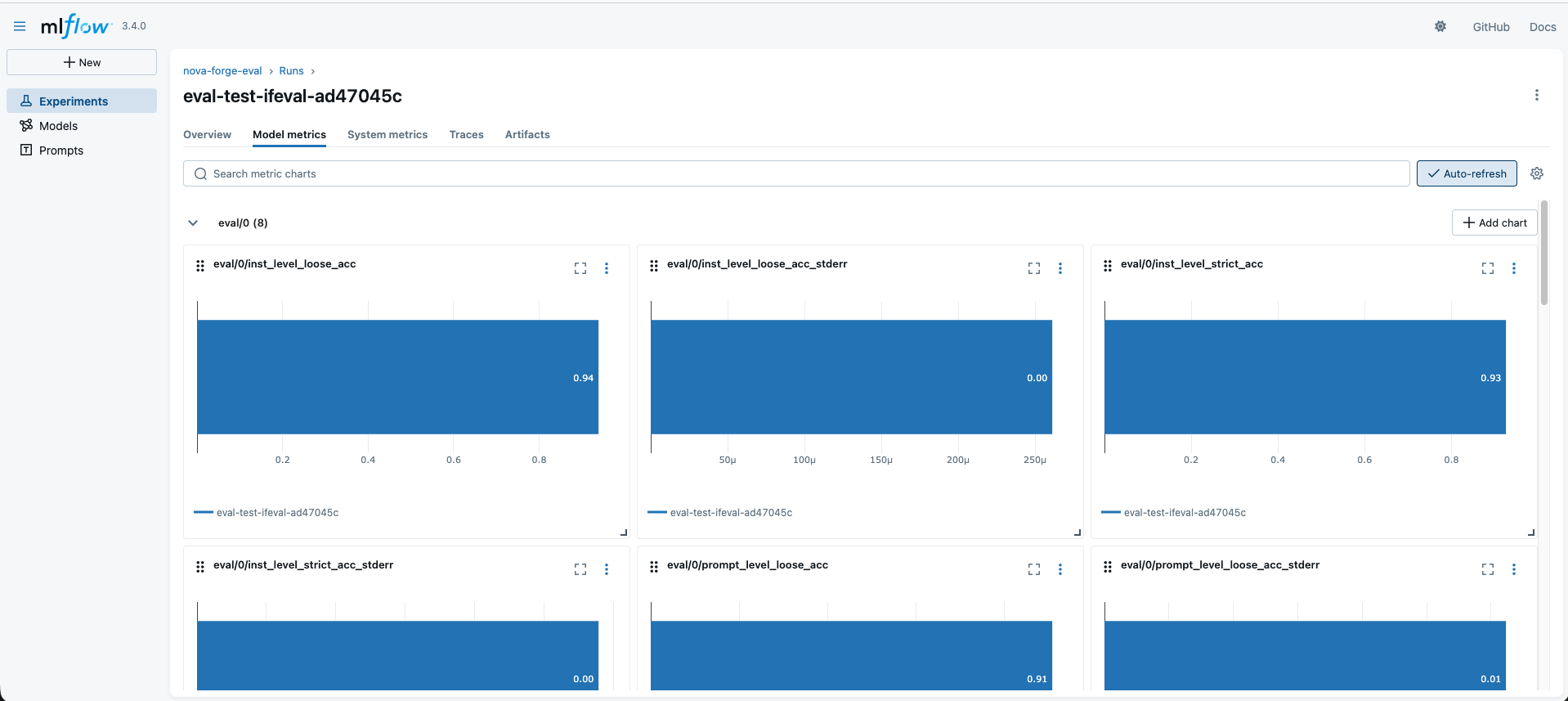

Step 5: Evaluating the Fine-Tuned Model

Evaluation is a critical phase, especially when employing data mixing, as it requires a dual assessment: verifying improvements on the domain-specific task and confirming the retention of general capabilities. Measuring only one aspect would provide an incomplete picture of the data mixing strategy’s effectiveness. Upon completion of training, the model checkpoint location can be retrieved from the output manifest.

from amzn_nova_forge.util.checkpoint_util import extract_checkpoint_path_from_job_output

checkpoint_path = extract_checkpoint_path_from_job_output(

    output_s3_path=training_result.model_artifacts.output_s3_path,

    job_result=training_result,

)

Configuring the Evaluation Infrastructure

Evaluation typically requires a single GPU instance, a significantly lighter footprint compared to the multi-instance setup for training. The infrastructure for evaluation is configured similarly to the training runtime.

eval_infra = SMHPRuntimeManager(

    instance_type=instance_type,

    instance_count=1,

    cluster_name=cluster_name,

    namespace=namespace,

)

eval_mlflow = MLflowMonitor(

    tracking_uri=mlflow_app_arn,

    experiment_name="nova-forge-eval",

)

evaluator = NovaModelCustomizer(

    model=Model.NOVA_LITE_2,

    method=TrainingMethod.EVALUATION,

    infra=eval_infra,

    output_s3_path=f"s3://{S3_BUCKET}/demo/eval-outputs/",

    mlflow_monitor=eval_mlflow,

)

Running Evaluations

Nova Forge supports three complementary evaluation methodologies:

1. Public Benchmarks (Measuring General Capability Retention):

These benchmarks are instrumental in assessing whether the data mixing strategy is successfully preventing the model from losing its general knowledge. A significant drop in MMLU scores, for instance, indicates that the data mix may require more Nova data. Similarly, a decline in IFEval performance suggests an adjustment in the instruction-following weights is needed.

# MMLU — assesses broad knowledge and reasoning across 57 subjects

mmlu_result = evaluator.evaluate(

    job_name="eval-mmlu",

    eval_task=EvaluationTask.MMLU,

    model_path=checkpoint_path,

)

# IFEval — evaluates the ability to follow structured instructions

ifeval_result = evaluator.evaluate(

    job_name="eval-ifeval",

    eval_task=EvaluationTask.IFEVAL,

    model_path=checkpoint_path,

)

2. Bring-Your-Own-Data (Measuring Domain-Specific Performance):

This approach involves using a held-out test set to quantify the performance improvements achieved on the specific domain task after fine-tuning.

byod_result = evaluator.evaluate(

    job_name="eval-byod",

    eval_task=EvaluationTask.GEN_QA,

    # S3_DATA_PATH already starts with "s3://"; ensure this path points to your evaluation data

    data_s3_path=f"{S3_DATA_PATH}/eval/gen_qa.jsonl",

    model_path=checkpoint_path,

    overrides={"max_new_tokens": 2048},

)

3. Large Language Model (LLM) as Judge:

For domains where automated metrics fall short, another LLM can be employed to assess the quality of responses, offering a nuanced evaluation.
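
This guide does not show the SDK's interface for judge-based evaluation, so the following is only a generic sketch of the idea: build a grading prompt for a judge model and parse a numeric score from its reply. The template wording, the 1-5 scale, and the helper names build_judge_prompt and parse_score are all assumptions for illustration; the call to the judge model itself is intentionally left out.

```python
import re

# Hypothetical grading template; adapt the wording and scale to the domain.
JUDGE_TEMPLATE = (
    "You are grading an answer to a medical question.\n"
    "Question: {question}\n"
    "Reference answer: {reference}\n"
    "Candidate answer: {candidate}\n"
    "Rate the candidate from 1 (poor) to 5 (excellent). "
    "Reply with 'Score: <number>' followed by a one-sentence justification."
)


def build_judge_prompt(question, reference, candidate):
    """Fill the grading template for one evaluation example."""
    return JUDGE_TEMPLATE.format(
        question=question, reference=reference, candidate=candidate
    )


def parse_score(judge_reply):
    """Extract the 1-5 score from the judge's reply, or None if it is missing."""
    match = re.search(r"Score:\s*([1-5])\b", judge_reply)
    return int(match.group(1)) if match else None


prompt = build_judge_prompt(
    "What are common causes of chest pain?",
    "Cardiac, pulmonary, gastrointestinal, and musculoskeletal causes.",
    "It is usually the heart or the lungs.",
)
print(prompt)
print(parse_score("Score: 3. Partially correct but incomplete."))
```

Returning None for replies that do not follow the requested format keeps malformed judge outputs from being silently counted as scores.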

Checking Results and Retrieving Outputs

After the evaluation jobs complete, their status can be checked, and detailed results can be retrieved.

# Check job status for all evaluations

print(f"MMLU Job Status: {mmlu_result.get_job_status()}")

print(f"IFEval Job Status: {ifeval_result.get_job_status()}")

print(f"BYOD Job Status: {byod_result.get_job_status()}")

# Retrieve the S3 path containing detailed evaluation results

print(f"MMLU Evaluation Output Path: {mmlu_result.eval_output_path}")

print(f"IFEval Evaluation Output Path: {ifeval_result.eval_output_path}")

print(f"BYOD Evaluation Output Path: {byod_result.eval_output_path}")

The evaluation output path directs users to a JSON file containing detailed metrics. Downloading and inspecting this file provides the precise scores for each evaluation. Furthermore, metrics can be published to MLflow tracking servers by specifying the tracking server URI during job creation, enabling organized storage and comparison of experimental results.

Interpreting Your Results

A structured decision framework aids in interpreting evaluation outcomes and guiding subsequent iterations:

| Observation | What it Means | What to Adjust |
|---|---|---|
| MMLU close to baseline (e.g., within 0.01–0.02) | Data mixing is successfully preventing forgetting. | Focus on optimizing domain performance. |
| MMLU significantly degraded | Model is forgetting general capabilities. | Decrease customer_data_percent or increase Nova data weights. |
| Domain task performance below expectations | Model is not learning sufficiently from data. | Increase customer_data_percent, add more training data, or increase max_steps. |
| IFEval degraded | Model is losing instruction-following ability. | Increase nova_instruction-following_percent. |
| Both MMLU and domain task improved | Ideal outcome. | Document the configuration and proceed to production deployment. |

As a benchmark, prior analyses of Amazon Nova 2 Lite on a VOC classification task reported a significant decline in general intelligence (MMLU dropping from 0.75 to 0.47) when fine-tuning solely on customer data, despite a boost in Domain F1. Conversely, a blended approach (75% customer + 25% Nova data) successfully recovered nearly all MMLU accuracy while still enhancing domain performance, illustrating the power of data mixing.
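
The decision framework above can also be expressed as a small helper that turns before-and-after scores into a suggested next step. This is a sketch under stated assumptions: the function name interpret_results, the 0.02 tolerance (taken from the upper end of the range in the table), and the domain scores in the example are illustrative, and only the MMLU figures 0.75 and 0.47 come from the text.

```python
def interpret_results(mmlu_baseline, mmlu_tuned, domain_baseline, domain_tuned,
                      mmlu_tolerance=0.02):
    """Map evaluation deltas to the adjustments suggested in the table above."""
    suggestions = []
    mmlu_drop = mmlu_baseline - mmlu_tuned
    domain_gain = domain_tuned - domain_baseline

    if mmlu_drop > mmlu_tolerance:
        suggestions.append(
            "MMLU degraded: decrease customer_data_percent or increase Nova data weights"
        )
    if domain_gain <= 0:
        suggestions.append(
            "Domain task not improved: increase customer_data_percent, "
            "add training data, or increase max_steps"
        )
    if not suggestions:
        suggestions.append(
            "MMLU near baseline and domain improved: document the configuration"
        )
    return suggestions


# MMLU values from the text; domain F1 values are hypothetical placeholders.
for line in interpret_results(0.75, 0.47, domain_baseline=0.40, domain_tuned=0.52):
    print(line)
```

A fuller version would add an IFEval comparison along the same lines, pointing to nova_instruction-following_percent when that score drops.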

Best Practices for Effective Fine-Tuning

To maximize the effectiveness of the fine-tuning process, consider the following best practices:

- Iterative Refinement: Treat fine-tuning with data mixing as an iterative cycle. Train, evaluate on both domain and general tasks, adjust the data mix and hyperparameters, and repeat until optimal performance is achieved.

- Data Quality is Paramount: Ensure your domain-specific data is clean, representative, and accurately reflects the target use case.

- Monitor General Capabilities: Continuously track performance on general benchmarks like MMLU to prevent catastrophic forgetting.

- Leverage MLflow: Utilize MLflow for experiment tracking, enabling easy comparison of different configurations and hyperparameter settings.

- Start Small: Begin with smaller training runs (a low max_steps value) to validate your setup and configurations before committing to longer, more resource-intensive training sessions.

Cleaning Up Resources

To prevent incurring ongoing charges after completing this walkthrough, it is essential to clean up the resources that were provisioned. This typically involves deleting the S3 bucket created for data storage and model outputs.

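# Delete the S3 bucket and its contents (a bucket must be empty before it can be deleted)

boto3.resource("s3").Bucket(S3_BUCKET).objects.all().delete()

s3.delete_bucket(Bucket=S3_BUCKET)

print(f"S3 bucket '{S3_BUCKET}' deleted.")

Conclusion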

This comprehensive guide has detailed the end-to-end workflow for fine-tuning Amazon Nova models using the Nova Forge SDK, with a particular emphasis on the transformative capabilities of data mixing. The SDK streamlines critical aspects such as data preparation, distributed training orchestration on SageMaker HyperPod, and multi-dimensional evaluation, allowing users to concentrate on their domain expertise and data.

Data mixing is instrumental in making fine-tuning a practical and robust solution for production environments, enabling models to possess both specialized domain knowledge and broad general intelligence. The process is best approached iteratively: train, evaluate across multiple dimensions, fine-tune the data mix and hyperparameters, and repeat until the desired balance for the specific use case is attained.

For in-depth documentation and further exploration of the Nova Forge SDK’s capabilities, users are encouraged to consult the Nova Forge Developer Guide and the Nova Forge SDK repository. By mastering these techniques, organizations can unlock the full potential of customized large language models for their unique AI challenges.